RESHAPE17 | programmable skins

Project Description

Designers

Inter_Skin // the skin as interface

The project “Inter_Skin // the skin as interface” is a wearable device that helps the user navigate the city, providing a whole new augmented experience of the cityscape.

THE IDEA

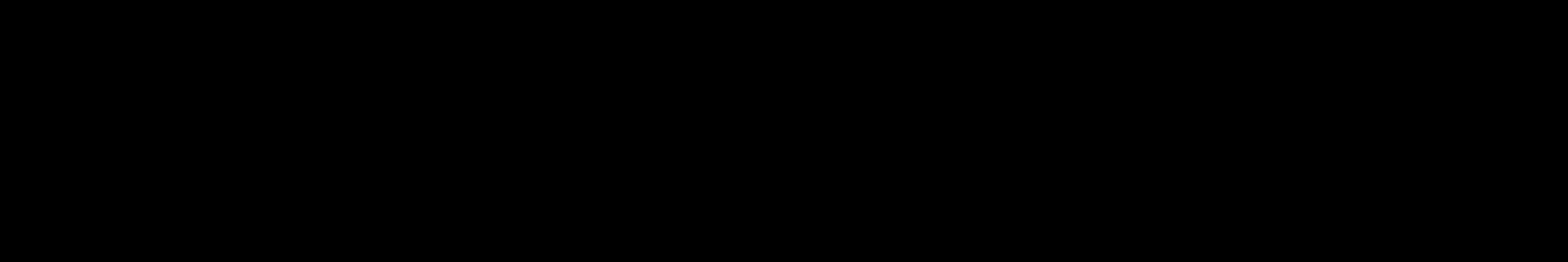

The initial stimulus was to explore the experience of the subject within the urban fabric through their sensorium. The dominance of vision as the main perceptual sense has led the other four to atrophy, especially in the perception of the built environment. The sound triggers provided by an urban environment are often underestimated, and the soundscape is more likely to be perceived as noise. However, sound is a dissemination of energy, and the conversion of energy can produce information. The present project attempts to read the soundscape as a different transcript, not as a lack but as a surplus that can generate new, useful data. This information, inherent in the sound, along with other visual and social data existing in an urban environment, is intended to be channeled back to the user as a new sensorial expression.

This mediation of information aims to be expressed in a new interface or materiality, in the direction of the non-yet. The tangible outcome of this idea is a wearable device, a second skin, connected to the cloud of information that floats through the city, allowing data and user to interact. The skin operates as the medium for a bi-directional exchange of information between the city and the user, helping the latter to navigate the urban space.

Each part of the interface, says Alexander Galloway, has its role to play. But what else flows from this? The existence of the internal interface within the medium is important because it indicates the implicit presence of the outside within the inside. And, again to be unambiguous, “outside” means something quite specific: The Social.

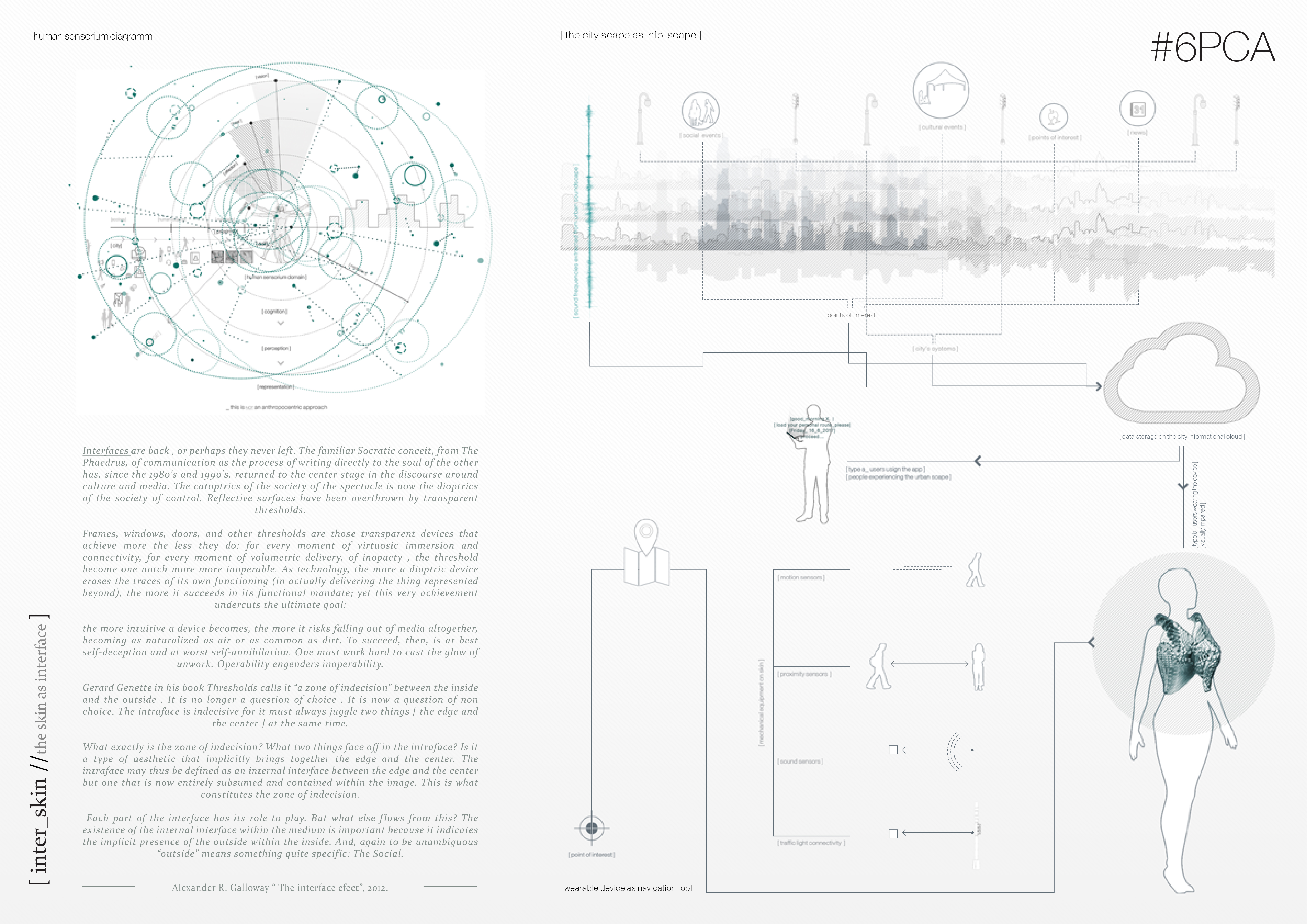

THE DESIGN

The wearable device expresses the ultimate level of engagement a user could have with the city. It could be used either by flâneurs who wish to explore the cityscape in an augmented way or by visually impaired people who need help navigating the city. Signals extracted from the cloud of information or from the sensors placed on the device are translated into sound and vibration output for the subject, aiding their navigation by pointing out possible obstacles, routes or points of interest.

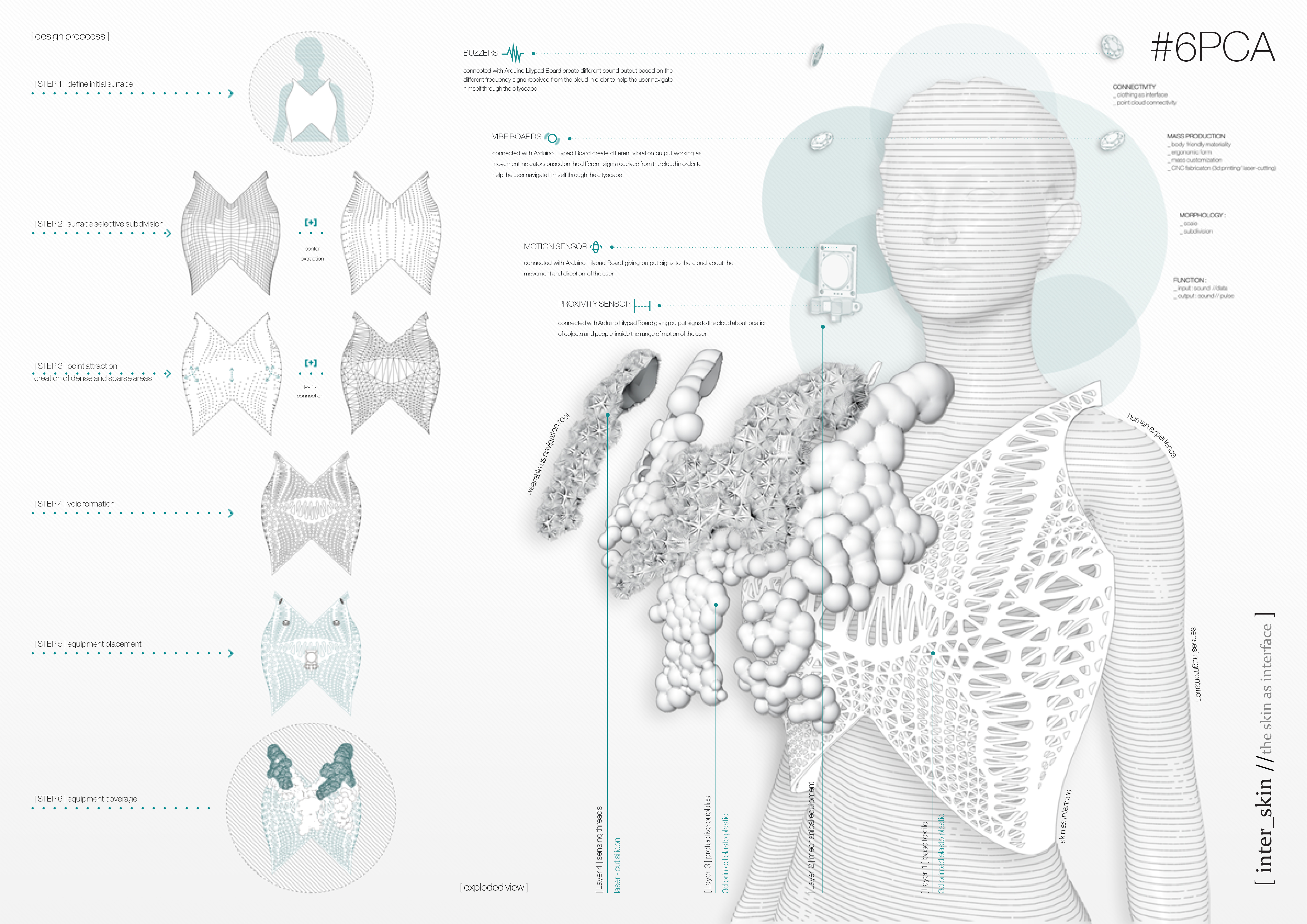

The device is composed of four layers. The first is the base textile, a 3D-printed elasto plastic vest that acts as the skeleton. The second is the hardware: an Arduino LilyPad board, a battery, and Wi-Fi and Bluetooth antennas on the back, and two buzzers, two vibe boards, a motion sensor and a proximity sensor on the front. The third consists of 3D-printed protective blobs that mark and enclose the hardware. The fourth is a fine layer of fibers that vividly expresses the sensing output by shaking lightly with the body's movement.
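As a rough illustration of how a proximity reading might be turned into vibration and sound output, the sketch below maps a distance to a vibration strength and a buzzer pitch. It is not the project's firmware: the function name `obstacle_feedback`, the distance thresholds, the 8-bit vibration scale and the tone range are all assumptions chosen for the example, and the logic is written in Python rather than as code for the board.

```python
from dataclasses import dataclass

# All values below are illustrative assumptions, not figures from the project.
MAX_RANGE_CM = 200.0   # assumed distance beyond which obstacles are ignored
MIN_RANGE_CM = 20.0    # assumed distance at which feedback saturates
PWM_MAX = 255          # assumed 8-bit scale for vibration strength
TONE_LOW_HZ = 200      # assumed buzzer pitch for a far obstacle
TONE_HIGH_HZ = 2000    # assumed buzzer pitch for a very near obstacle


@dataclass(frozen=True)
class Feedback:
    vibration_pwm: int  # 0 (off) to PWM_MAX (strongest)
    buzzer_hz: int      # 0 means the buzzer stays silent


def obstacle_feedback(distance_cm: float) -> Feedback:
    """Map a proximity reading to vibration and sound (hypothetical mapping)."""
    if distance_cm >= MAX_RANGE_CM:
        # Nothing close enough to report.
        return Feedback(0, 0)
    # Clamp very short readings so the output saturates instead of overflowing.
    clamped = max(distance_cm, MIN_RANGE_CM)
    # 0.0 at the edge of the range, 1.0 at or below the saturation distance.
    closeness = (MAX_RANGE_CM - clamped) / (MAX_RANGE_CM - MIN_RANGE_CM)
    pwm = round(closeness * PWM_MAX)
    hz = round(TONE_LOW_HZ + closeness * (TONE_HIGH_HZ - TONE_LOW_HZ))
    return Feedback(pwm, hz)


if __name__ == "__main__":
    for d in (250, 150, 60, 10):
        print(d, obstacle_feedback(d))
```

A linear mapping is the simplest choice here; a real device would likely need calibration per sensor and per wearer, and could just as well use pulsed vibration patterns instead of continuous strength.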

CNC technology allows full customization of the wearable device, so every user can order a device fitted to their own body proportions through an online store. A smartphone app connects each device to the cloud of information and uploads the user's data, such as state and interests, to the cloud.

FABRICATION

Due to limited resources, the prototyping process involved a number of experiments in how to represent the wearable. The final prototype consists of 28 pieces of 3D-printed PLA, made with a 3D Systems Cube printer, and 9 pieces of laser-cut leather, cut with an Epilog Fusion M2 laser, which lighten the wearable where no extra support was needed. The pieces were joined with transparent thread.

Spyridoula Steliou

Areti Damvopoulou